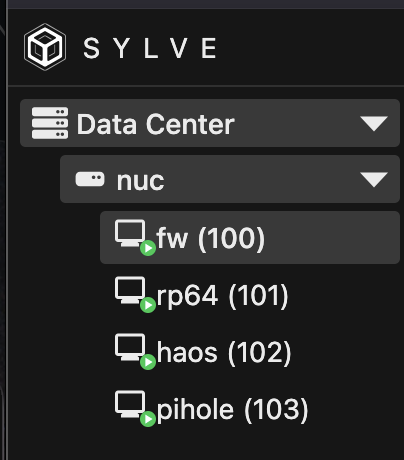

Bye bye Proxmox, hello Sylve. This was "fun". It took longer than expected because of VLANs. I thought it was MikroTik's weird VLAN implementation fucking with me *again*, but I seem to finally have that covered after years of frustration. It seems to be a bug in the new if_rge driver: I had to disable `vlanhwtag` on the interface for the tagged packets to arrive on the bridge interface. Migrating the VMs was easy thanks to zfs send/recv.

@hayzam What is the reason Sylve creates zvols by default? I seem to recall a blog post that showed that zvols were considerably slower than having raw VM images on disk.

@flo The difference really is negligible, at least anecdotally. I'd call it a rounding error, and that's after testing probably hundreds of VMs deployed on all kinds of systems.

Also, I'd be curious to read the blog post you're mentioning, and maybe run identical tests so we can find out whether the latest versions change its conclusions.

And I personally like the aesthetics of a ZFS volume (which is also why it's the default on Sylve): it's meant to be a block device rather than a file trying to masquerade as one. By default we have also tuned it for performance compared to Proxmox, setting things like `primarycache` to `metadata` (which gave us a 10-20% boost), etc.

@hayzam Interesting. Thanks for the explanation. I personally like zvols more, too, and had been using them for most of my bhyve VMs until I read said post. I don't recall exactly which one it was, but I'm pretty sure it was one by Jim Salter. Could have been https://jrs-s.net/2018/03/13/zvol-vs-qcow2-with-kvm/ or https://klarasystems.com/articles/virtualization-showdown-freebsd-bhyve-linux-kvm/

I did some very basic testing of ZVOL vs raw disk performance on a single bhyve VM, and the results were pretty interesting.

Small but important note: the "raw disk" here was still a file on a ZFS dataset, so this may look very different on UFS or a genuinely different backing setup.

fio made the raw disk look slightly ahead: ZVOL did ~127 MiB/s / ~32.5k IOPS, while raw disk did ~144 MiB/s / ~36.9k IOPS.
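As a quick sanity check on those fio figures: throughput divided by IOPS gives the implied I/O size, and the ratio of the two throughputs gives the relative gap. This is a rough sketch in Python that uses only the rounded numbers quoted above. The helper names are made up for illustration, and the ~4 KiB reading is an inference from the arithmetic, not something taken from the actual fio job file.

```python
def implied_block_kib(mib_per_s: float, iops: float) -> float:
    """Average I/O size in KiB implied by a throughput/IOPS pair."""
    return mib_per_s * 1024 / iops


def relative_gain(baseline: float, other: float) -> float:
    """Fractional gain of `other` over `baseline` (0.10 means 10% higher)."""
    return (other - baseline) / baseline


# Rounded figures from the post above.
zvol = {"mib_s": 127.0, "iops": 32_500.0}
raw = {"mib_s": 144.0, "iops": 36_900.0}

for name, result in (("zvol", zvol), ("raw", raw)):
    size = implied_block_kib(result["mib_s"], result["iops"])
    print(f"{name}: ~{size:.1f} KiB per I/O")

gap = relative_gain(zvol["mib_s"], raw["mib_s"])
print(f"raw vs zvol throughput: {gap:+.1%}")
```

Both results work out to roughly 4 KiB per I/O, and the raw file comes out a bit over 13% ahead on throughput.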

But pgbench (postgres) told a very different story: ZVOL did ~1698 TPS at 9.3ms avg latency, while raw disk did ~423 TPS at 37.3ms avg latency.
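To put those pgbench numbers side by side, here is a small Python sketch using only the rounded figures above. It computes the TPS ratio and, via Little's law (in-flight work = throughput × latency), the client count both runs imply. The helper name is hypothetical, and the client count is inferred, not stated in the post; it only holds if every client was kept busy for the whole run.

```python
def implied_clients(tps: float, avg_latency_ms: float) -> float:
    """Little's law: concurrent transactions = throughput x average latency."""
    return tps * avg_latency_ms / 1000.0


# Rounded figures from the post above.
zvol_tps, zvol_latency_ms = 1698.0, 9.3
raw_tps, raw_latency_ms = 423.0, 37.3

tps_ratio = zvol_tps / raw_tps
latency_ratio = raw_latency_ms / zvol_latency_ms

print(f"zvol TPS is ~{tps_ratio:.1f}x the raw-file TPS")
print(f"raw-file latency is ~{latency_ratio:.1f}x the zvol latency")
print(f"implied clients (zvol): ~{implied_clients(zvol_tps, zvol_latency_ms):.1f}")
print(f"implied clients (raw):  ~{implied_clients(raw_tps, raw_latency_ms):.1f}")
```

The two ratios agree at about 4x, and both runs imply the same concurrency of roughly 16, which is consistent with the two tests having used the same pgbench settings.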

`npm install` on the Sylve repo took roughly the same time on both media, down to the millisecond. I actually expected raw disk to be faster there since npm is random read/write heavy, but the ZVOL seems to be doing just fine.

Alpine VM shape: 4 vCPU, 4 GiB RAM, NVMe emulation, real NVMe underneath.

P.S. I ran both the fio and pgbench tests 5 times and took the average. This was a brand new machine, so there were no noisy neighbors or other stuff running on the host either.