This passage from Ingold (*Being Alive*, 2011, p. 62) reads as more optimistic than it used to. LLMs are closed rather than open, and can we really say their users aren't losing skill as "the ‘living appendages’ of lifeless mechanism"?

@yaxu i wouldn't read that as optimistic, as he certainly agrees that some skills are being lost. the sigaut quote especially shows this: some skills are successfully "automated", in the sense that the machines do what people have done before well enough that corporations can make more profit with machines than with people. but "along a line of resistance" other skills are developed.

re: open vs closed, i'd assume he's not talking about open source or "malleability" or anything like that. just before this paragraph he's quoting gilbert simondon, who also wrote a lot about the milieu or environment of technics. so in that context "open" would imo mean something like "interacting with the real world". so the circular saw from the previous paragraph, which is supposed to be a closed machine sawing wood perfectly the same way every time, is actually open, because the wood it saws is "imperfect" and has variable density etc.

similarly, LLMs are used by real people and run on physical hardware with electricity and water from real sources, etc. a closed technology would be one that could perfectly enclose all the parameters of the skill it aims to automate, which is something no machine can ever achieve, certainly not LLMs. i suppose the "imperfections" in this case would be the differences and subtleties in human language/writing, the very idea of modelling "reasoning" as a stochastic process, etc.

to come back to the skills question: it's too early to say what skills people are developing through their use of LLMs. that some de-skilling is taking place is undeniable; the question is just which skills will take their place.

@yaxu i see people describe LLMs as algorithms quite often but i'm pretty sure that's not the right word

i suppose they can *mimic* algorithms though

@sean_ae Yep, I'd generally push back on LLMs being described as algorithms: if no one can see how they work, there's no algorithm to talk about, it's just black-box statistics. I think here, though, they're just talking about algorithms in terms of systems/machine-making in general, and LLMs fit that.

@yaxu @sean_ae @toxi re: this idea of black boxes and comprehensibility specifically: https://scholar.social/@olivia/116543457466890160

(not suggesting we reopen the conversation :), just thought this may be of interest)

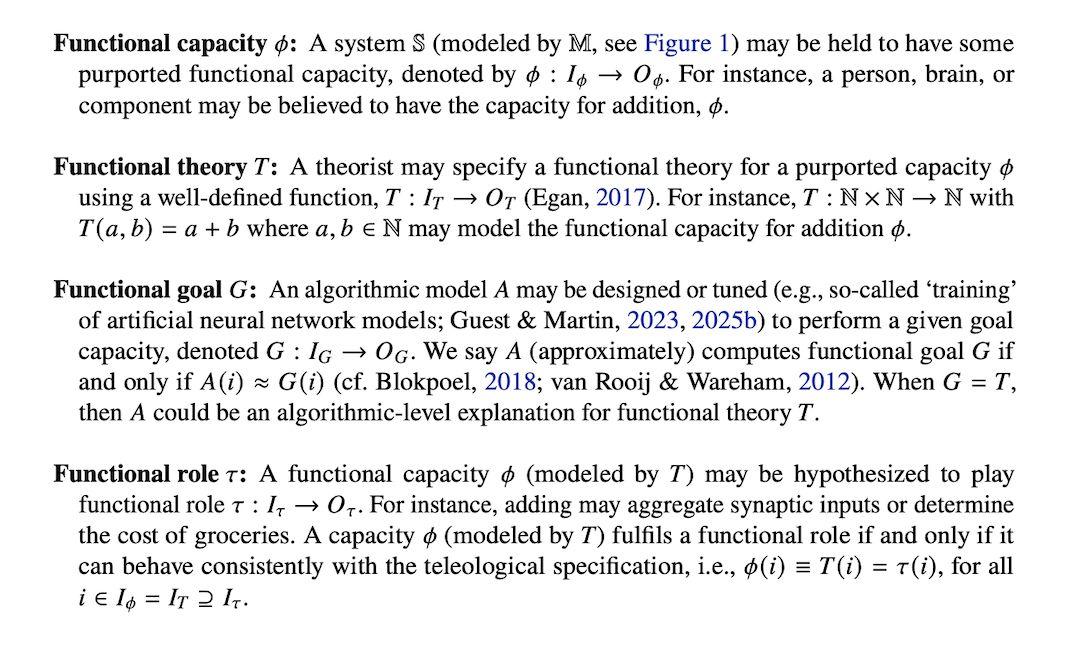

@sean_ae @emenel @yaxu it's a really short pdf, so it's probably easier to read in your pdf viewer, but here's a screenshot. and sorry for not being clear myself!

Guest, O., Blokpoel, M., & van Rooij, I. (2026). What the func? Multiple Realizability need not be Vague. Zenodo. https://doi.org/10.5281/zenodo.19388964

@yaxu @sean_ae tbh we could describe the function of an llm (or any "ai") in algorithmic terms, it's just a stochastic and fractal algorithm... so we can't trace the exact path from input to output, but we can describe at an abstract level what happens to the input to transform it into the output...

and yes, there was a lot of optimistic (naive tbh) writing about tech from a leftist perspective over the preceding 40 years :)