
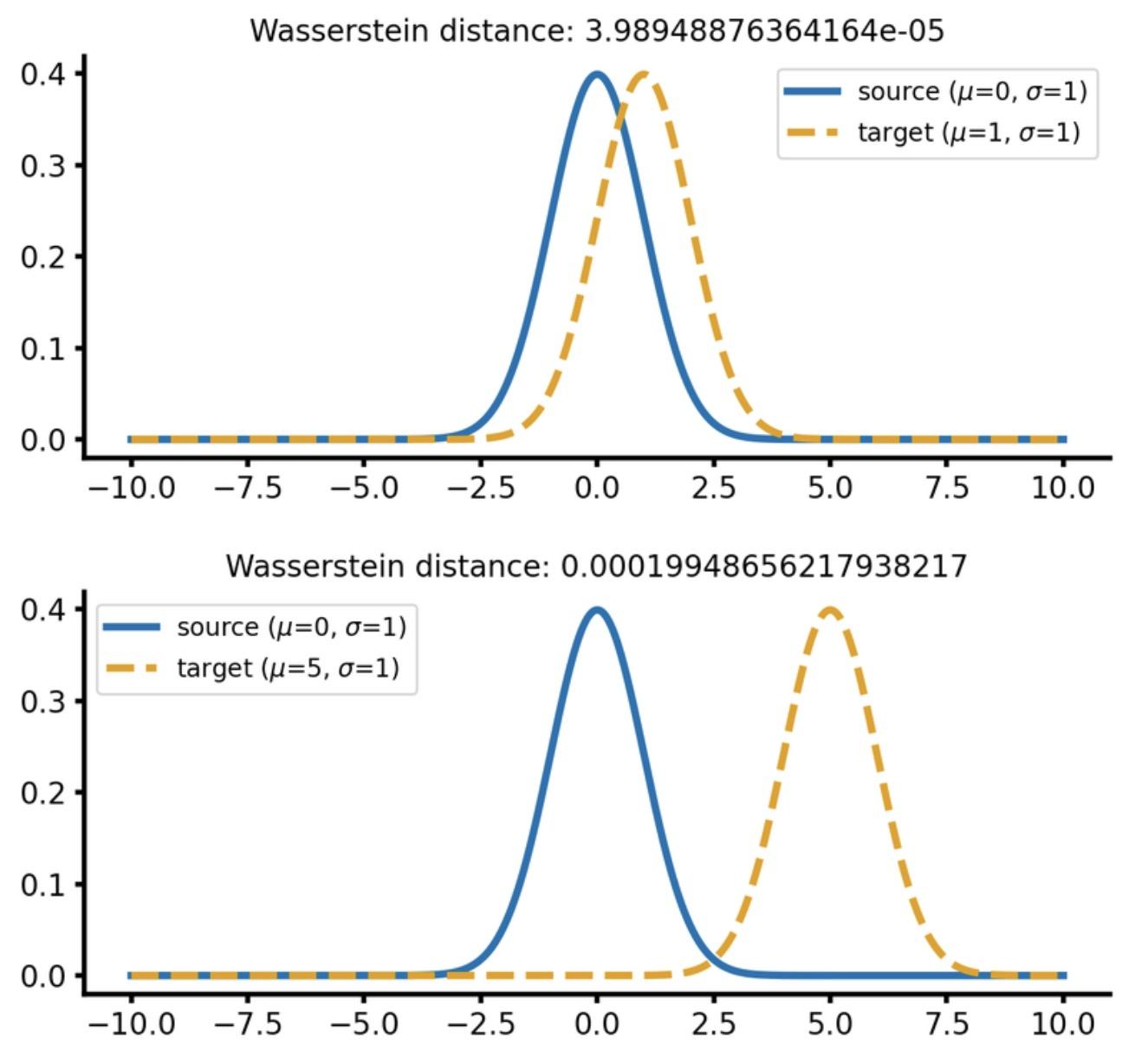

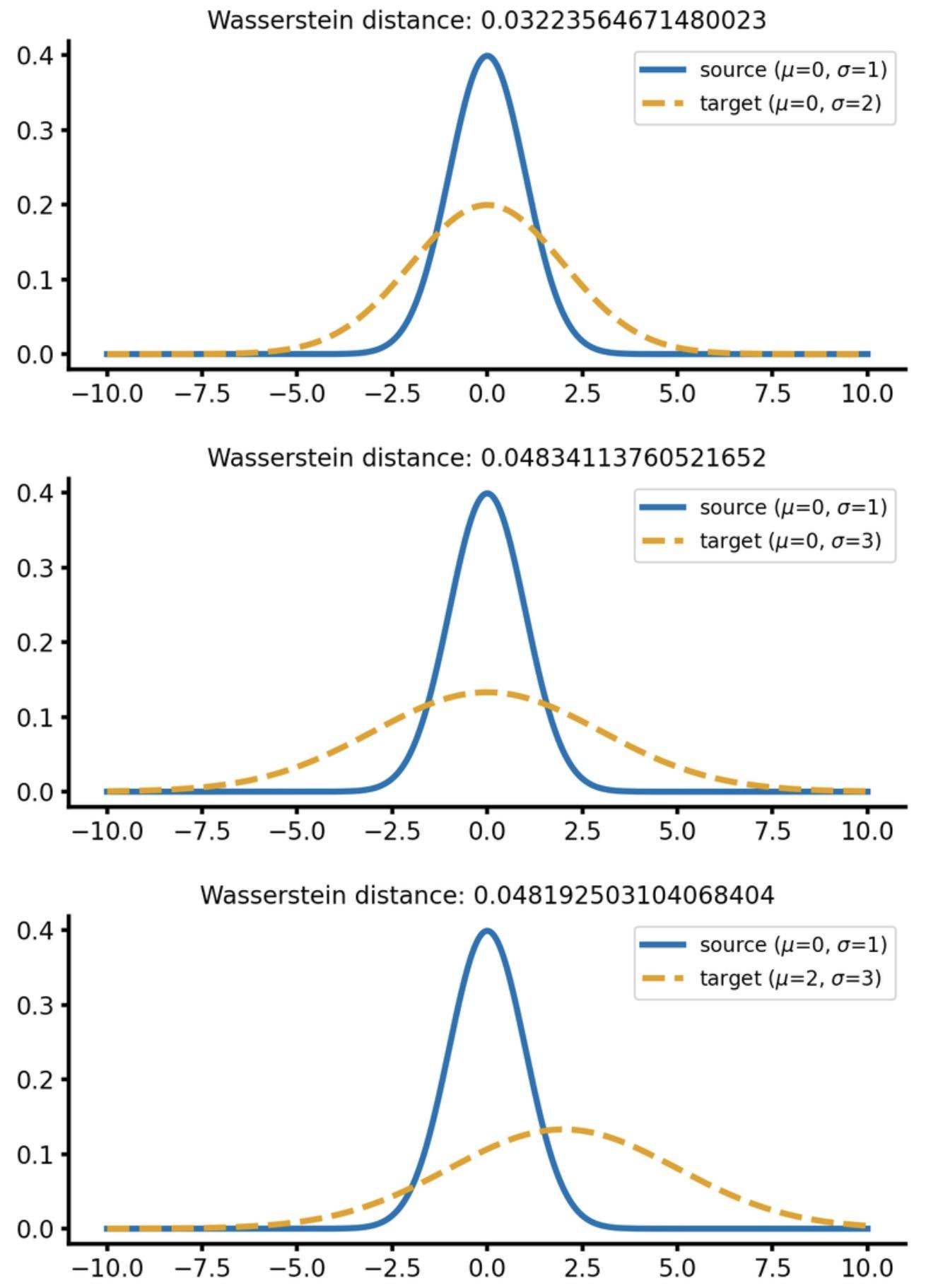

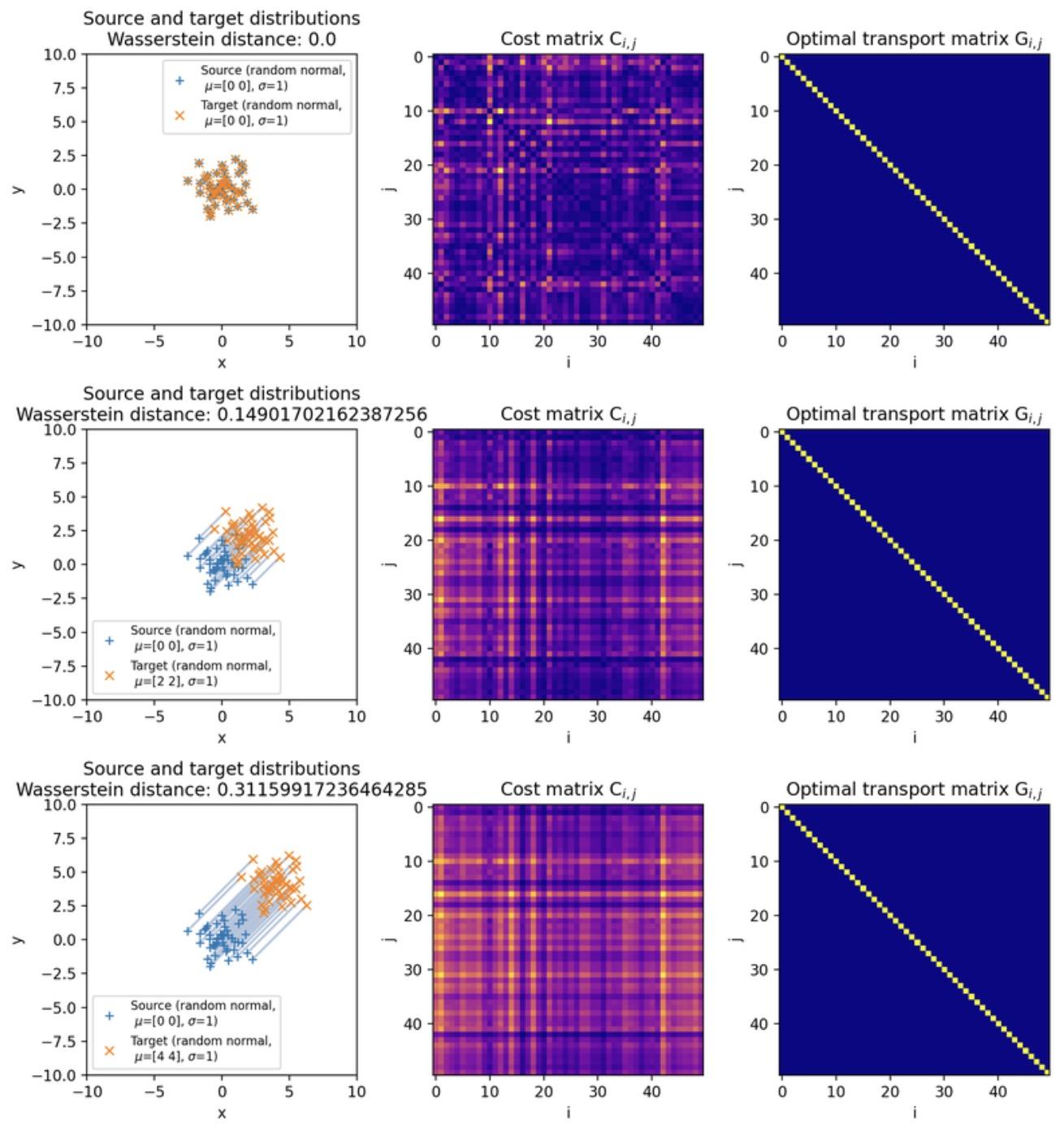

I actually wrote a short introduction to #WassersteinDistance and #OptimalTransport some time ago, if you’re looking for a more intuitive entry point:

🌍 https://www.fabriziomusacchio.com/blog/2023-07-23-wasserstein_distance/

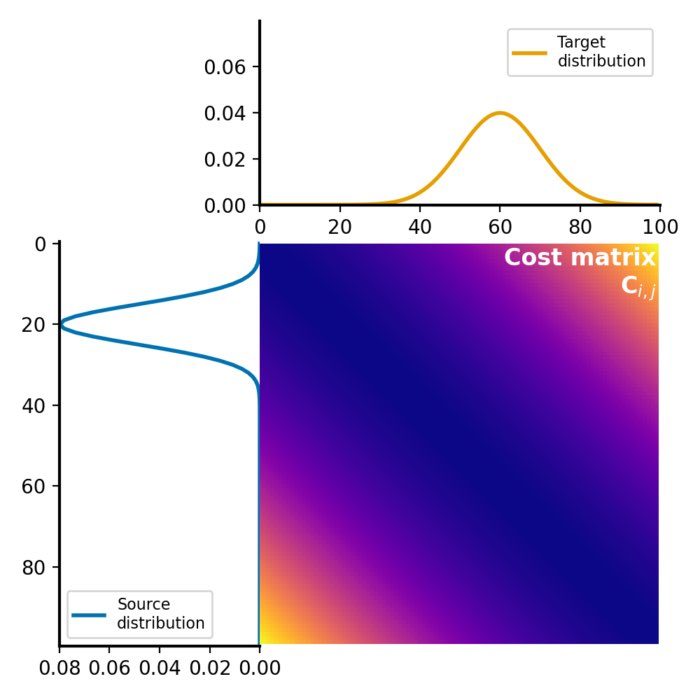

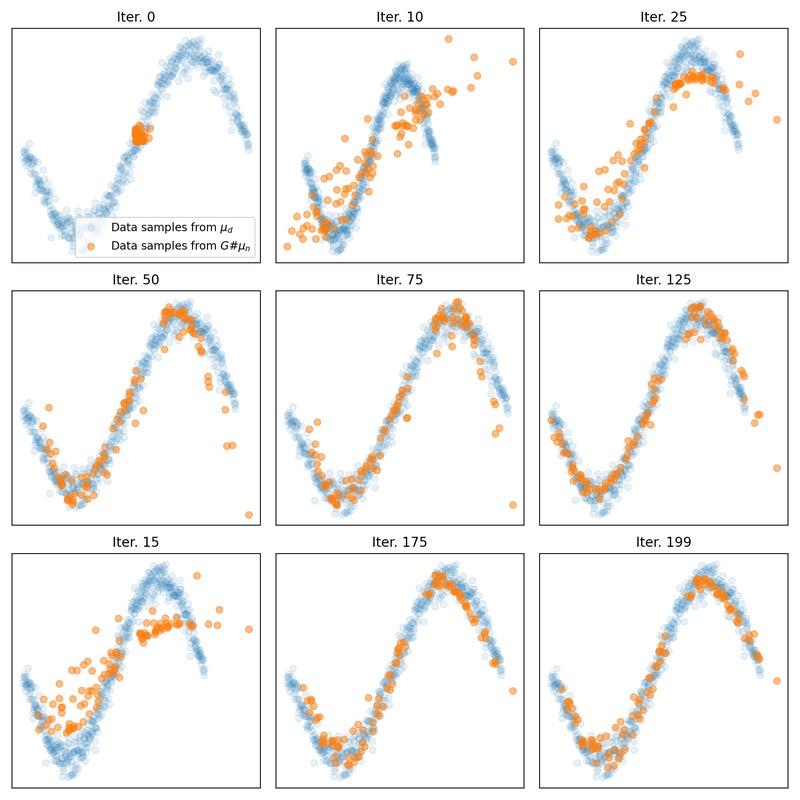

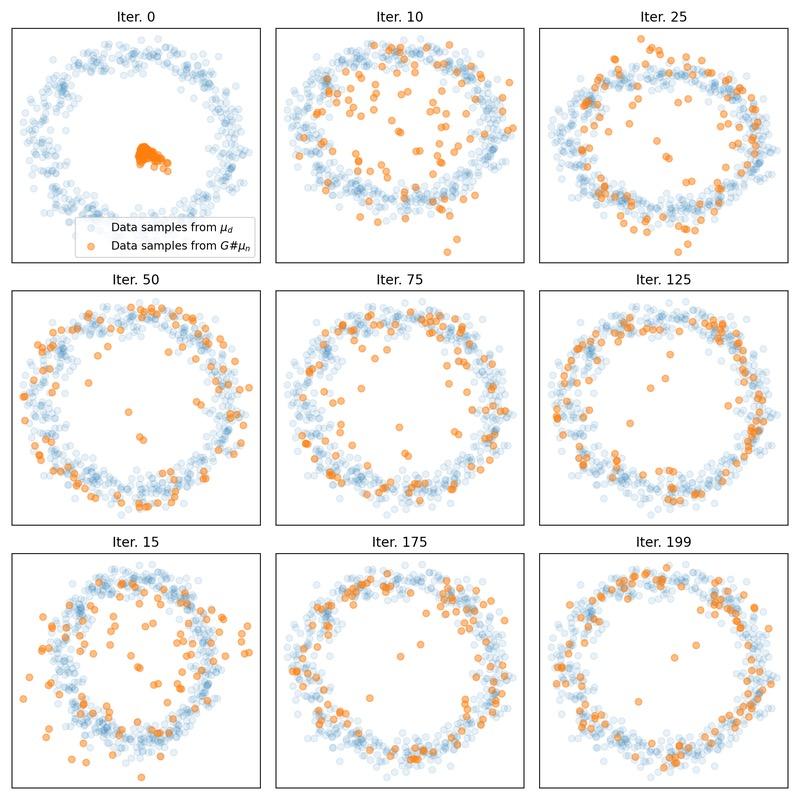

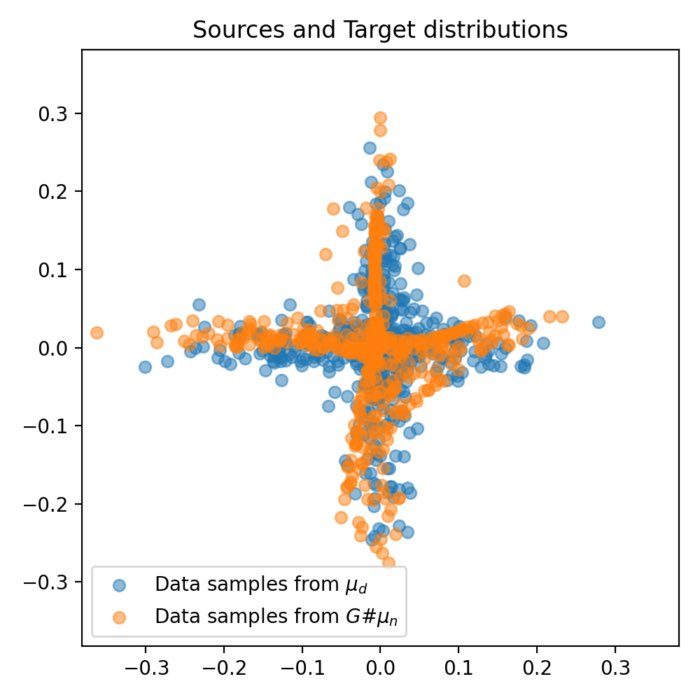

Following up on this, I also explored a more direct use of #WassersteinDistance in #WGANs: Instead of training a discriminator, the generator is optimized by explicitly computing the #OptimalTransport distance between real and generated samples. This turns the loss into the actual metric of interest and removes the adversarial setup, leading to a more direct and stable training signal. And we can generate cool animations, too ^_^

🌍 https://www.fabriziomusacchio.com/blog/2023-07-30-wgan_with_direct_wasserstein_distance/
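To make the idea concrete, here is a minimal toy sketch, not the implementation from the blog post. It makes several assumptions: the data is 1D, the "generator" is a single learnable shift `theta` applied to fixed noise, and the loss is the squared 2-Wasserstein distance (chosen here because it gives a smooth gradient). In 1D, optimal transport between two equal-size samples simply pairs the sorted values, so no solver is needed. The names `wasserstein2_sq_1d` and `train_shift_generator` are hypothetical.

```python
import random


def wasserstein2_sq_1d(xs, ys):
    """Squared 2-Wasserstein distance between two equal-size 1D samples.

    In 1D the optimal transport plan pairs the sorted samples, so the
    distance is the mean squared difference of the sorted values.
    """
    if len(xs) != len(ys):
        raise ValueError("samples must have the same size")
    pairs = zip(sorted(xs), sorted(ys))
    return sum((a - b) ** 2 for a, b in pairs) / len(xs)


def train_shift_generator(real, steps=200, lr=0.1, seed=0):
    """Fit a toy generator G(z) = z + theta by descending the OT loss.

    There is no discriminator: the loss is the transport distance itself.
    """
    rng = random.Random(seed)
    noise = [rng.gauss(0.0, 1.0) for _ in real]  # fixed latent samples
    theta = 0.0
    losses = []
    for _ in range(steps):
        fake = [z + theta for z in noise]
        losses.append(wasserstein2_sq_1d(fake, real))
        # Shifting all samples keeps their order, so the sorted pairing is
        # unchanged and d(loss)/d(theta) = 2 * (mean(fake) - mean(real)).
        grad = 2.0 * (sum(fake) / len(fake) - sum(real) / len(real))
        theta -= lr * grad
    return theta, losses


if __name__ == "__main__":
    data_rng = random.Random(1)
    real = [data_rng.gauss(3.0, 1.0) for _ in range(500)]
    theta, losses = train_shift_generator(real)
    print(f"learned shift: {theta:.3f}")
    print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

In higher dimensions the sorting trick no longer applies, and the transport distance between batches has to come from an optimal transport solver instead.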

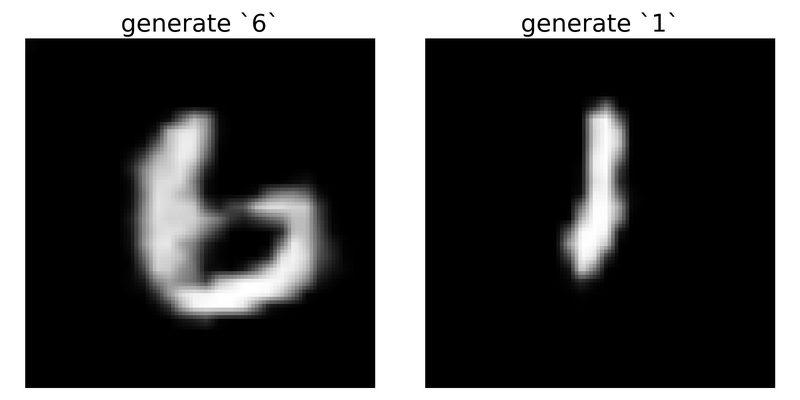

Taking this one step further, I also looked at #ConditionalGANs. They extend #GANs by conditioning both the generator and the discriminator on labels, so you can explicitly control what is generated. In the #MNIST case, this means generating specific digits and even smoothly interpolating between them:

🌍 https://www.fabriziomusacchio.com/blog/2023-07-30-cgan/
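As a rough sketch of how such an interpolation can be set up (an assumption, not necessarily what the blog post does): the label is encoded as a one-hot vector and concatenated with the noise vector, and interpolating between two digits means blending their one-hot vectors while keeping the noise fixed. A real cGAN might use a learned label embedding instead. All function names below are hypothetical, and the trained generator itself is left out.

```python
def one_hot(label, num_classes=10):
    """Encode a class label as a one-hot list of floats."""
    return [1.0 if i == label else 0.0 for i in range(num_classes)]


def interpolate_labels(a, b, t, num_classes=10):
    """Blend the one-hot codes of labels a and b; t=0 gives a, t=1 gives b."""
    ya, yb = one_hot(a, num_classes), one_hot(b, num_classes)
    return [(1.0 - t) * u + t * v for u, v in zip(ya, yb)]


def generator_input(noise, condition):
    """Conditioning by concatenation: the generator sees [noise, condition]."""
    return list(noise) + list(condition)


def interpolation_inputs(noise, a=6, b=1, frames=5):
    """Build one generator input per animation frame, with fixed noise."""
    if frames < 2:
        raise ValueError("need at least two frames")
    return [
        generator_input(noise, interpolate_labels(a, b, k / (frames - 1)))
        for k in range(frames)
    ]


if __name__ == "__main__":
    noise = [0.5, -0.5]  # stand-in for a sampled latent vector
    for row in interpolation_inputs(noise, a=6, b=1, frames=5):
        print(row)
```

Feeding each of these inputs through a trained conditional generator would yield one frame of a 6-to-1 morph like the one in the attached GIF.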

#MachineLearning #GenerativeAI #CGAN #GAN

(The attached GIF shows the interpolation between the digits 6 and 1)

… okay, I didn't expect Mastodon to speed up the playback of attached GIFs 🙈

@FabMusacchio Warp GIF, energise!

They are presenting their work at #AISTATS2026 and have posted their poster on Bluesky: https://bsky.app/profile/clement-bonet.bsky.social/post/3mkvgyvjhtk2w