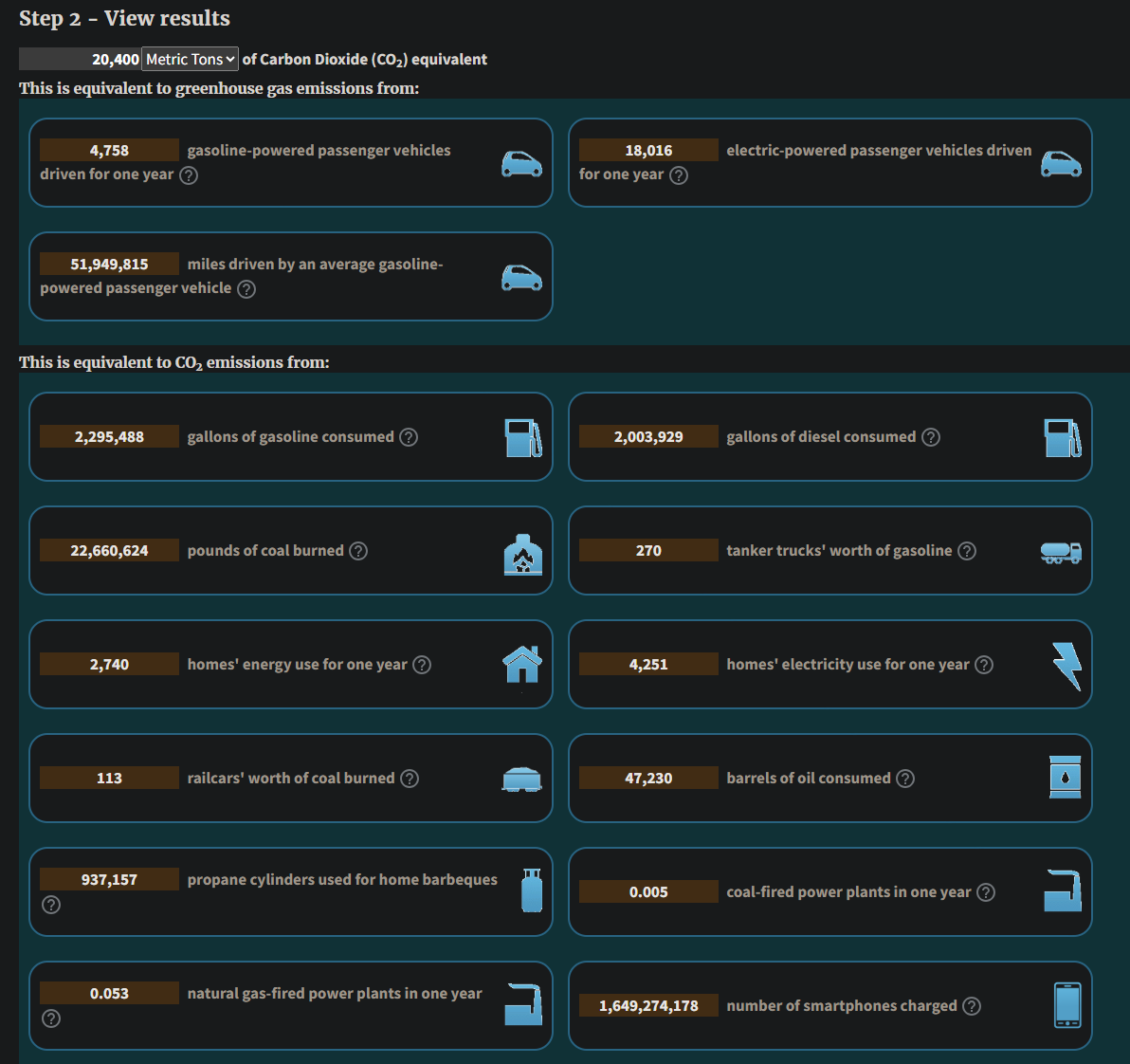

Coming at this another way: MIT Technology Review cites 50 GWh for training GPT-4. Depending on US zip code, that's going to be somewhere between 11,000 and 20,000 metric tons of CO2 equivalent (50 GWh is 50 million kWh, at roughly 0.22 to 0.40 kg CO2e per kWh). That's in the same ballpark as the cited Mistral number.
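The unit conversion is easy to slip on by a factor of 1000, so here is a minimal sketch of the arithmetic. The 50 GWh figure is the one cited above. The two grid intensities (0.22 and 0.40 kg CO2e per kWh) are assumptions, picked as plausible low and high values for US grid regions, not numbers taken from the article. The function and constant names are made up for illustration.

```python
# Back-of-envelope conversion from training energy to emissions.
GWH_TO_KWH = 1_000_000      # 1 GWh = 1,000,000 kWh
KG_PER_METRIC_TON = 1_000   # 1 metric ton = 1,000 kg


def training_emissions_tonnes(energy_gwh, kg_co2e_per_kwh):
    """Return metric tons of CO2e for a given energy use and grid intensity."""
    kwh = energy_gwh * GWH_TO_KWH
    return kwh * kg_co2e_per_kwh / KG_PER_METRIC_TON


GPT4_TRAINING_GWH = 50  # figure cited in the post

# ASSUMED grid intensities in kg CO2e per kWh (not from the article).
LOW_INTENSITY = 0.22
HIGH_INTENSITY = 0.40

low = training_emissions_tonnes(GPT4_TRAINING_GWH, LOW_INTENSITY)
high = training_emissions_tonnes(GPT4_TRAINING_GWH, HIGH_INTENSITY)
print(f"{low:,.0f} to {high:,.0f} metric tons CO2e")
```

Under those assumptions the result lands in the thousands of metric tons, i.e. kilotons, not tens of tons.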

So we have to conclude that training is bad, but at current usage rates, cumulative inference will blow past that number in short order. Don't let anyone cite per-inference costs as a stat in LLMs' favor.

https://www.technologyreview.com/2025/05/20/1116327/ai-energy-usage-climate-footprint-big-tech/