Has any "open" model published data on the energy requirements for training? It sure would be nice to know those sorts of details.

@mttaggart m'colleague @fershad did a bit of a survey of this - there's precious few, fwiw: https://carbontxt.org/ai-model-cards

@mttaggart This would really give up the game for the Chinese models because they are being distilled from American frontier models.

Oh yeah! Mistral did publish some data, for what it's worth:

https://mistral.ai/news/our-contribution-to-a-global-environmental-standard-for-ai

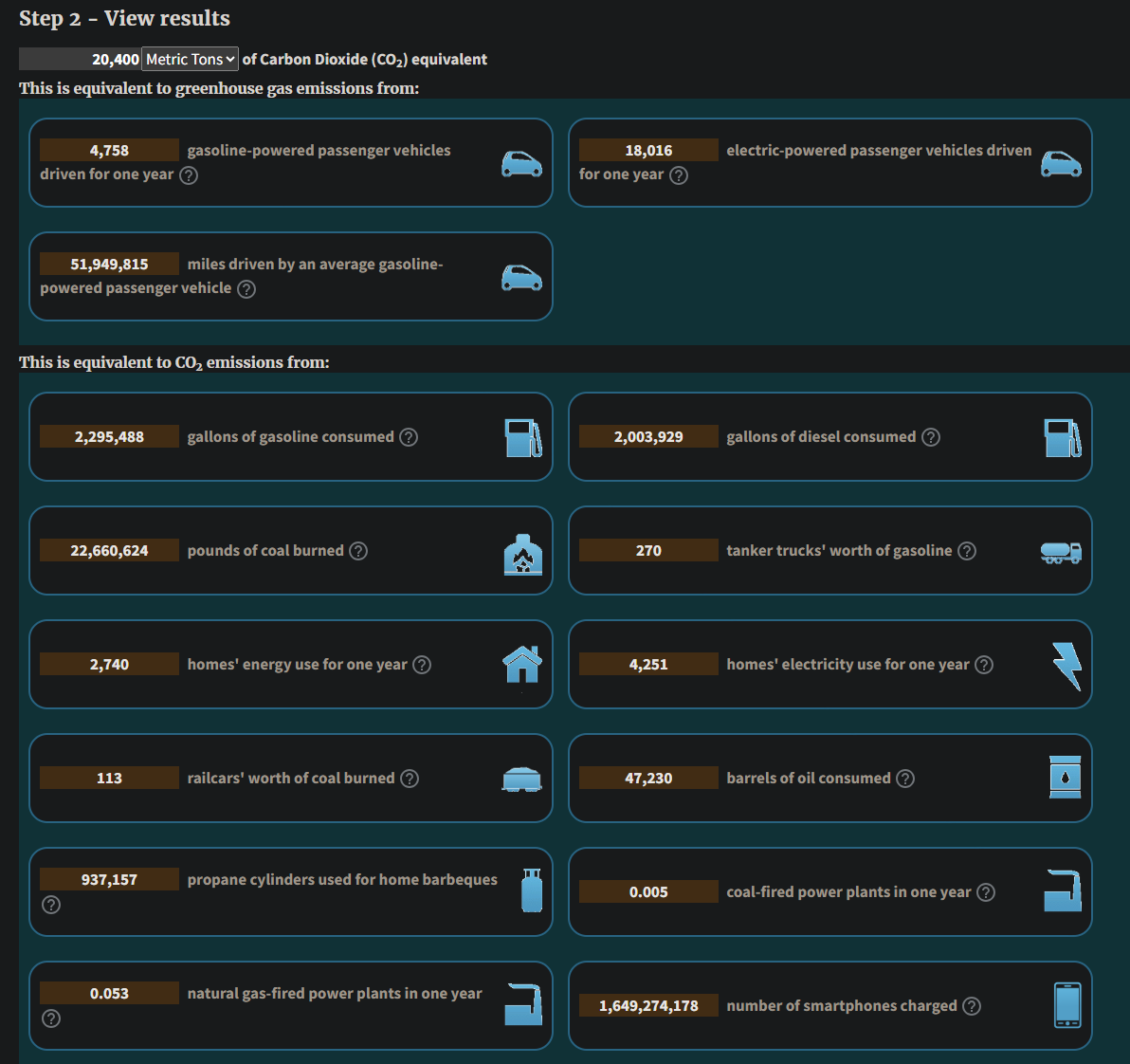

Okay so if I did this right with the EPA's converter, training/using (marginal) Mistral was mind-blowingly bad. Please someone check my math. The stated CO2e was 20.4 kilotons.

Seems like they have combined training and 18 months of inference. So the question is how much of this number was pure inference, and how much was training.

Coming at this another way: MIT cites 50 GWh for training GPT-4. Depending on US zip code, that's going to be somewhere between 11,000 and 20,000 metric tons of CO2 equivalent (11-20 kilotons). That's in the same ballpark as the cited Mistral number.
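The conversion behind an estimate like this is just energy times grid carbon intensity. Here is a minimal sketch of the unit chain, assuming illustrative intensities of 0.22 and 0.40 kg CO2e per kWh for a cleaner and a dirtier US region. Those two factors are assumptions picked for illustration, not figures from the MIT article or the EPA tool, and `co2e_tonnes` is a made-up helper name.

```python
def co2e_tonnes(energy_gwh: float, kg_co2e_per_kwh: float) -> float:
    """Convert an energy figure in GWh to metric tons of CO2e."""
    kwh = energy_gwh * 1_000_000            # 1 GWh = 1,000,000 kWh
    kg = kwh * kg_co2e_per_kwh              # kWh * (kg/kWh) = kg
    return kg / 1000                        # 1 metric ton = 1,000 kg


TRAINING_GWH = 50          # GPT-4 training energy as cited in the thread
ASSUMED_LOW = 0.22         # assumed kg CO2e/kWh, cleaner regional grid
ASSUMED_HIGH = 0.40        # assumed kg CO2e/kWh, dirtier regional grid

low = co2e_tonnes(TRAINING_GWH, ASSUMED_LOW)
high = co2e_tonnes(TRAINING_GWH, ASSUMED_HIGH)
print(f"{low:,.0f} to {high:,.0f} metric tons CO2e")
```

Swapping in the factor for a specific zip code changes the result linearly, so the GWh-to-kWh step is the one worth double-checking.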

So we have to conclude that training is bad, but given current usage rates, inference will blow away that number in short order, so don't let anyone cite per-inference costs as a stat in LLMs' favor.

https://www.technologyreview.com/2025/05/20/1116327/ai-energy-usage-climate-footprint-big-tech/

It's very weird to bundle them like that. Looks like they want to hide the worst.

The only way to suss it out that I can see is to take their 1.14 g of CO2 per 400 tokens and estimate how many tokens a given fraction of the 20 kilotons buys you. I guess the thing to do would be to dig up user/revenue numbers to try to estimate that.

Revenue ~30-60 million USD/yr:

https://electroiq.com/stats/mistral-ai-statistics/

Users pay ~$20/mo, so ~4-5 million users? How many tokens do you think they ran through in 18 months?
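One way to frame that question is to run it backwards from the emissions side. This is a rough sketch, assuming the 1.14 g per 400 tokens figure applies uniformly to all inference and treating the inference share of the 20.4 kilotons as an unknown to sweep over. The shares, the 4.5 million user count, and the function names are all placeholders, not published numbers.

```python
TOTAL_CO2E_G = 20.4e3 * 1e6    # 20.4 kilotons -> metric tons -> grams
G_PER_RESPONSE = 1.14          # g CO2e per 400-token response (from the thread)
TOKENS_PER_RESPONSE = 400
MONTHS = 18                    # reporting window mentioned in the thread


def tokens_for_share(inference_share: float) -> float:
    """Tokens that a given share of the total footprint would pay for."""
    grams = TOTAL_CO2E_G * inference_share
    return grams / G_PER_RESPONSE * TOKENS_PER_RESPONSE


def tokens_per_user_month(inference_share: float, users: float) -> float:
    """Average tokens per user per month implied by that share."""
    return tokens_for_share(inference_share) / users / MONTHS


ASSUMED_USERS = 4.5e6          # midpoint of the thread's guess, not a real stat

for share in (0.25, 0.50, 0.75):   # hypothetical inference shares
    total = tokens_for_share(share)
    per_user = tokens_per_user_month(share, ASSUMED_USERS)
    print(f"share={share:.0%}: {total:.2e} tokens, "
          f"{per_user:,.0f} tokens/user/month")
```

If the per-user figure for a given share looks implausibly low or high against real usage, that narrows down how much of the total could be inference.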

@mttaggart interesting... Deleted my previous reply since I assume their calculation of CO2e takes the electricity mix into account, but it's still surprising given that the French grid is 67% nuclear and 25% renewable. Did they train the models somewhere else?

@mttaggart not really because they used French electricity which is 67% nuclear and about 25% renewable

@mttaggart Ai2 did this for OLMo 2, see the environmental impact section: https://arxiv.org/pdf/2501.00656