We'll see how I feel in the morning, but for now I seem to have convinced myself to actually read that fuckin Anthropic paper

I just

I'm not actually in the habit of reading academic research papers like this. Is it normal to begin these things by confidently asserting your priors as fact, unsupported by anything in the study?

I suppose I should do the same, because there's no way it's not going to inform my read on this

@jenniferplusplus it's not a great lit review/paper in terms of connecting to broader literature; that is, however, typical for software research (not for more empirical fields like psychology imho)

@jenniferplusplus no, usually academic studies have a null hypothesis of "the effect we're trying to study does not exist" and are required to provide evidence sufficient to reject that hypothesis

"AI" is not actually a technology, in the way people would commonly understand that term.

If you're feeling extremely generous, you could say that AI is a marketing term for a loose and shifting bundle of technologies that have specific useful applications.

I am not feeling so generous.

AI is a technocratic political project for the purpose of industrializing knowledge work. The details of how it works are a distant secondary concern to the effect it has, which is to enclose and capture all knowledge work and make it dependent on capital.

@jenniferplusplus

bookmarked for future reference, boosting is not enough

@jenniferplusplus How about not just capital, but also permission?

Imagine a world in which "AI" is actually successful: it is widely, maybe even largely universally, adopted, and it actually works to deliver on its promises. (I *said* "imagine"! Bear with me.) In such a world, what happens to someone (person, company, country, whatever slicing you want to look at) who is *denied access to* this technology for whatever reason?

The power held by those in control of allowing access to that tech…

@mkj Yeah, same thing. You can't use industrial machines without the permission of the owner.

So, back to the paper.

"How AI Impacts Skill Formation"

https://arxiv.org/abs/2601.20245

The very first sentence of the abstract:

> AI assistance produces significant productivity gains across professional domains, particularly for novice workers.

1. The evidence for this is mixed, and the effect is small.

2. That's not even the purpose of this study. The design of the study doesn't support drawing conclusions in this area.

Of course, the authors will repeat this claim frequently. Which brings us back to MY prior, which is that this is largely a political document.

@jenniferplusplus It's less a claim and more an intentionally-unsubstantiated background premise which the supposed research will treat as an assumed truth.

@dalias Honestly, yes. I suspect the purpose of this paper is to reinforce that production is a correct and necessary factor to consider when making decisions about AI.

And secondarily, I suspect it's establishing justification for blaming workers for undesirable outcomes; it's our fault for choosing to learn badly.

And now for a short break

I have eaten. I may be _slightly_ less cranky.

Ok! The results section! For the paper "How AI Impacts Skill Formation"

> we design a coding task and evaluation around a relatively new asynchronous Python library and conduct randomized experiments to understand the impact of AI assistance on task completion time and skill development

...

Task completion time. Right. So, unless the difference is large enough that it could change whether or not people can learn things at all in a given practice or instructional period, I don't know why we're concerned with task completion time.

Well, I mean, I have a theory. It's because "AI makes you more productive" is the central justification behind the political project, and this is largely a political document.

@jenniferplusplus you have inspired me to read it as well (over beer and pizza) and... yeah, what she said. I think I gave up before the results section. I did feel that the prep-work to calibrate the experiment (e.g. the local item dependence in the quiz) was pretty well done, but I will defer to any sociologist who says otherwise.

Why is any of the so-called productivity material in the paper at all?

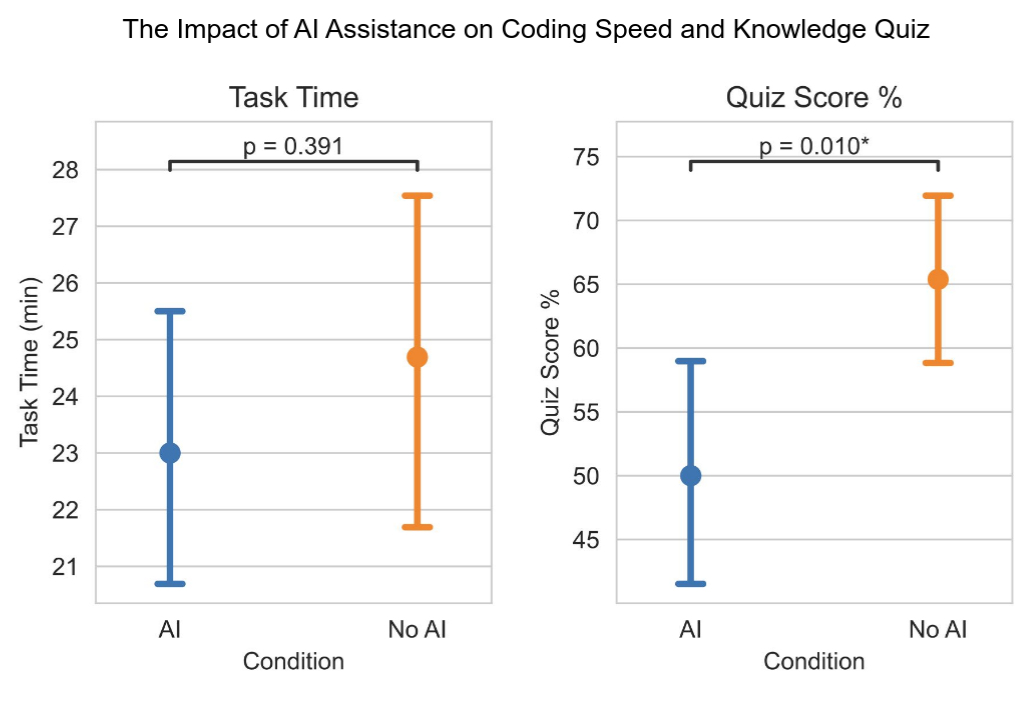

> We find that using AI assistance to complete tasks that involve this new library resulted in a reduction in the evaluation score by 17% or two grade points (Cohen’s d = 0.738, p = 0.010). Meanwhile, we did not find a statistically significant acceleration in completion time with AI assistance.

I mean, that's an enormous effect. I'm very interested in the methods section, now.
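For anyone who hasn't met Cohen's d before: it's the difference between two group means, divided by a pooled standard deviation. By Cohen's rough conventions, 0.5 counts as "medium" and 0.8 as "large". Here's a minimal sketch of the common pooled-SD form. I'm assuming that's the variant the paper uses (I haven't confirmed it), and the scores below are made-up illustrative numbers, NOT data from the study.

```python
import math
import statistics


def cohens_d(group_a, group_b):
    """Cohen's d using a pooled standard deviation (sample variances, n - 1)."""
    n_a, n_b = len(group_a), len(group_b)
    var_a = statistics.variance(group_a)  # sample variance of group A
    var_b = statistics.variance(group_b)  # sample variance of group B
    # Pool the two variances, weighting each by its degrees of freedom.
    pooled_sd = math.sqrt(
        ((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2)
    )
    return (statistics.mean(group_a) - statistics.mean(group_b)) / pooled_sd


# Hypothetical quiz scores for illustration only; not from the paper.
control = [70, 80, 90, 60, 75]
assisted = [55, 65, 75, 45, 60]

d = cohens_d(control, assisted)
print(f"Cohen's d = {d:.2f}")
```

The point is that d is in units of standard deviations, so it tells you how far apart the groups are relative to how much scores vary within each group.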

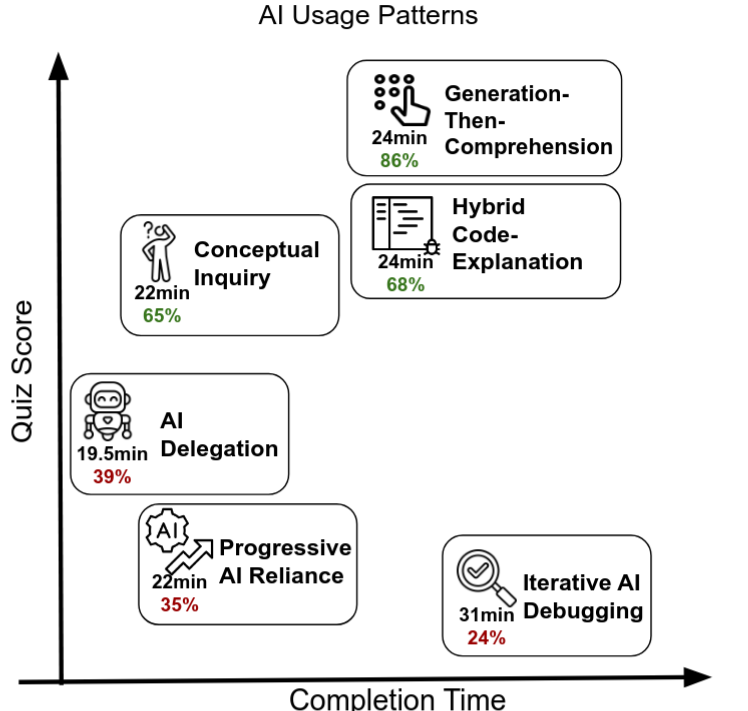

> Through an in-depth qualitative analysis where we watch the screen recordings of every participant in our main study, we explain the lack of AI productivity improvement through the additional time some participants invested in interacting with the AI assistant.

...

Is this about learning, or is it about productivity!? God.

> We attribute the gains in skill development of the control group to the process of encountering and subsequently resolving errors independently

Hm. Learning with instruction is generally more effective than learning through struggle. A surface-level read would suggest that the stochastic chatbot actually has a counter-instructional effect. But again, we'll see what the methods actually are.

Edit: I should say, doing things with feedback from an instructor generally has better learning outcomes than doing things in isolation. I phrased that badly.

They reference these figures a lot, so I'll make sure to include them here.

> Figure 1: Overview of results: (Left) We find a significant decrease in library-specific skills (conceptual understanding, code reading, and debugging) among workers using AI assistance for completing tasks with a new python library. (Right) We categorize AI usage patterns and found three high skill development patterns where participants stay cognitively engaged when using AI assistance

@jenniferplusplus I like the fact that their own research doesn't fit their lazy claim you reference, and they spend a lot of time trying to work out how the claim can be true, even though their own evidence is against it (and more in line with the mixed evidence in the literature, as you say).

@jenniferplusplus No it is not. That kind of thing is left to the realm of "self-publishing". Was this thing peer reviewed?

@seanwbruno It is not. https://arxiv.org/abs/2601.20245

@jenniferplusplus You have entirely more stamina than I have. I just read the first sentence of the abstract and emitted a guffaw and exclaimed, out loud for the spouse to hear, "Citation needed!".