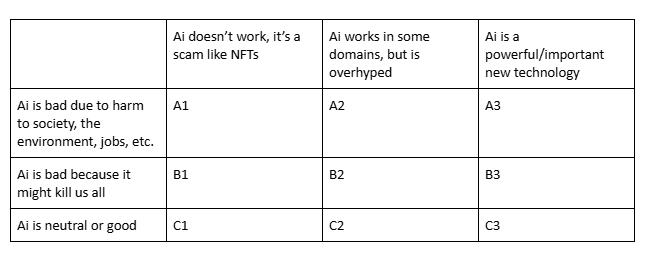

Which is closest to your view?

@ZachWeinersmith I'm one of the lonely C2s.

It's like a lot of things. The technology itself could be grounded in a completely legal and moral landscape. We could imagine a moderately sized data farm powered by solar energy and consuming only public domain, open source, and Creative Commons works for training.

If it's not being built that way, it's because society, not the technology, made some bad choices.

A2, being mostly harmful to society in myriad ways, while being partially functional in narrow use cases for some domains.

@ZachWeinersmith A3, but why is this not a poll?

Also, is this basically an AD&D alignment chart?

@ZachWeinersmith I'm usually C3 with caveats about capital concentration (what do you mean you are an "AI first" company but you don't run your own models?).

However, having had peculiar experiences with AI psychos in managerial positions, I sometimes land on A3...

I also feel like I lack the theoretical framework to evaluate the impact (in software engineering). E.g.

1. It's nice to ask Claude what the syntax is for the git command I forgot, but is it worth having it run the command for me without my knowledge?

2. The steps we take to reduce context windows for humans are likely good for LLMs too. How do we measure human context windows?

3. If a procedure can be described to the LLM and saved as a "skill", isn't it better to make it into a deterministic script?

4. Eventually the uncertainty/error rates of a natural language processor will be extremely low -- will they ever be lower than the rate of bugs introduced by a deterministic procedure?

5. If you need to add more and more details to get the LLM to do what you want, how is that different from writing lower level code?

If anyone has something related I'd love to read it.

@ZachWeinersmith Yet another A2 here.

@ZachWeinersmith C2 I guess, but only in an abstract sense. I can use AI for good, but most uses are bad.

@ZachWeinersmith A1, with a touch of A2 for very specific use cases.

@ZachWeinersmith A2, A1 when I'm feeling particularly jaded or grumpy

@ZachWeinersmith A1, because "artificial intelligence" isn't a thing. Machine learning, including LLMs, solidly A2.

@ZachWeinersmith solidly A1

It's destroying people's critical thinking skills, in some cases causing irreversible harm (death), and has no real-world value.

The number of times I've seen people ask AI a question, then have to check it was right - meaning it would have taken the same or less time to just figure it out from the jump? Bananas.

I'm also so sick of jobs that will get zero benefit from AI having to find a way to integrate it to please leadership.

Fuck AI.