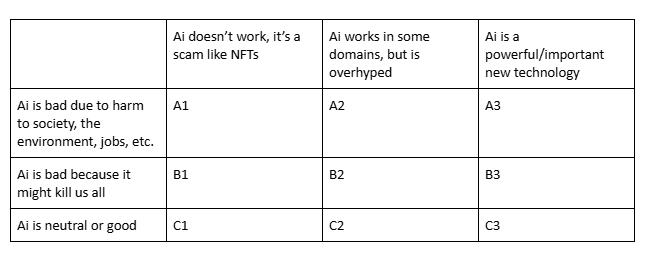

Which is closest to your view?


@ZachWeinersmith A2+C2. I eliminate A3 and C3 primarily because it is not a technology, nor is AI a coherent set of technologies, and it is as much a set of competing political enterprises as it is about technology

@ZachWeinersmith It depends.

Generative AI? A2.

AI in general? B3 to C3.

@ZachWeinersmith A2. Leans slightly to C2 though: the usage of AI is bad because it's overhyped and is now taking jobs that should not be replaced, but it's debatable whether to attribute it entirely to the technology itself, which is immature (though it just makes AI "neutral" at most, not "good").

@ZachWeinersmith Somewhere between A1 and A2, because where it “works” and it’s useful it still needs to be babysat because of how often it’s wrong, and where it doesn’t need to be attended because of the allowed tolerances, it’s mostly used for fraud.

@ZachWeinersmith If you're in row A or B (and I am), aren't the columns just an irrelevant distraction?

@ZachWeinersmith A2 except for Anthropic and OpenAI which are A12

@ZachWeinersmith Like everyone assuming "AI" means "generative AI".

I thought of saying A2, but I'm going A1. It's closer to the truth.

What the AI companies are doing is a scam. They don't care what the tech is good for, only what they can make people think it's good for. They are pushing the tech to be used as an unscoped technology, "the everything machine", to be used as much as possible in all areas, both where it does good and where it does immense harm.

It looks like it's good at a few things (e.g. bug hunting) but that's incidental. Their effort goes into making generative AI appear to be good at things it is not.

@ZachWeinersmith A2. Especially insofar as AI actually has a meaning (to me and the rest of the olds) beyond just LLMs & their image and video analogues.

@ZachWeinersmith @iris_meredith

A2.

As a writer who has always played with tech, GPT2 was so much fun because it was weird and stupid. Models today are still nonsense (and can be fun for play) but have dangerous hyper-realistic human-shaped masks grafted on.

@ZachWeinersmith If we mean "AI" in the sense of the dominant discourse, A1. If we mean AI in the historical sense, C2 or C3.

Depends on what you mean by “AI”.

Assuming we’re talking about generative “AI” like LLMs and diffusion models, A2.

…with a caveat that few of the “good” use cases, if any, are really all that good, and most will likely get rapidly less good as major “AI” companies start charging closer to true costs for the services.

@ZachWeinersmith I bet you'd get very different answers if you asked about "works in my area of expertise" rather than "works" generally

@ZachWeinersmith Multiclassing all As and all Bs, somehow.

@ZachWeinersmith depends on what you mean by AI:

If it's what the tech industry is trying to sell - A1.

If it's the specific technology behind what they're trying to sell - A2.

If it's machine learning in general, including LLMs (just not at the scale of the current models), then C3.

@ZachWeinersmith A mix of A1 & A2.

@ZachWeinersmith LLMs? Firmly in the A1 category. The "stochastic" part is what makes them seem like AI, but it's also what makes them a fraud. Randomness is what fools people and is also likely a big contributor to their worst fabrications.

Also they are practically weapons-grade tools to make code harder to maintain because they fill it with random but convincing nonsense that does nothing, but seems to be functional.

@ZachWeinersmith GenAI especially LLMs: A1 (and "etc" is doing a lot of work....)

@ZachWeinersmith A2 but with moments of A1

@ZachWeinersmith A2 for LLMs. summarization for non-critical info can be pretty useful, but not worth setting the world on fire for.

other kinds of AI can have impressive performance (image recognition, for one), although of course uses can be ethical or unethical.

@ZachWeinersmith

A1 or A2, depending on your definition of “AI”

@ZachWeinersmith Deep learning in general can be very useful. LLMs for writing software are pretty good at getting from "I only have a specification" to "I have the skeleton of an application that needs to be fleshed out by an engineer" and are unfortunately good at creating mountains of technical debt. LLMs as search engines can turn up useful references but the summaries cannot be trusted. LLMs as genies fulfilling wishes are a bad idea.

@ZachWeinersmith currently A2, expecting that to evolve into C3 in the distant future provided we can do something about tech bros, billionaires and rampant capitalism.

@ZachWeinersmith Surprisingly, I chose C3 based on the chosen titles. It's way overhyped right now, but in the end it's corporations and people that suck.

@ZachWeinersmith

A1.2 if you're talking about LLMs

Which is basically what the vast majority of people are being sold as AI

@ZachWeinersmith A2-ish. Some AI works in some domains. The generative chatbot stuff is hard A1.

@ZachWeinersmith None of them. Mostly because I doubt that you are using a definition of “AI” that matches the one in use for nearly 70 years and I reject the “modern” redefinition.

If you had written “LLMs” instead of “AI” then your categories might have been relevant.