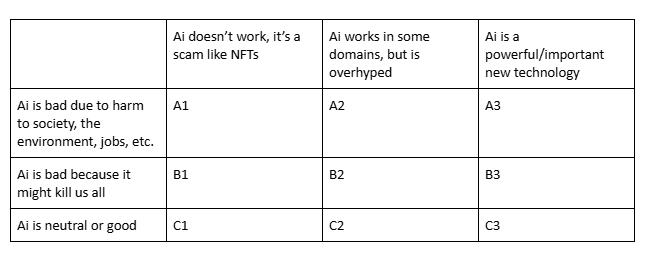

Which is closest to your view?


@ZachWeinersmith LLMs? Firmly in the A1 category. The "stochastic" part is what makes them seem like AI, but it's also what makes them a fraud. Randomness is what fools people and is also likely a big contributor to their worst fabrications.

Also they are practically weapons-grade tools to make code harder to maintain because they fill it with random but convincing nonsense that does nothing, but seems to be functional.

@ZachWeinersmith GenAI, especially LLMs: A1 (and "etc" is doing a lot of work....)

@ZachWeinersmith A2 but with moments of A1

@ZachWeinersmith A2 for LLMs. Summarization of non-critical info can be pretty useful, but not worth setting the world on fire for.

Other kinds of AI can have impressive performance (image recognition, for one), although of course uses can be ethical or unethical.

@ZachWeinersmith

A1 or A2, depending on your definition of “AI”

@ZachWeinersmith Deep learning in general can be very useful. LLMs for writing software are pretty good at getting from "I only have a specification" to "I have the skeleton of an application that needs to be fleshed out by an engineer" and are unfortunately good at creating mountains of technical debt. LLMs as search engines can turn up useful references but the summaries cannot be trusted. LLMs as genies fulfilling wishes are a bad idea.

@ZachWeinersmith currently A2, expecting that to evolve into C3 in the distant future provided we can do something about tech bros, billionaires and rampant capitalism.

@ZachWeinersmith Surprisingly, I chose C3 based on the chosen titles. It's way overhyped right now, but in the end it's corporations and people that suck.

@ZachWeinersmith

A1.2 if you're talking about LLMs

Which is basically what the vast majority of people are being sold as AI