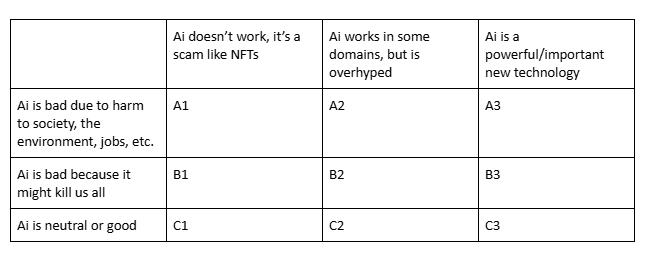

Which is closest to your view?

Post

@ZachWeinersmith

A2, leaning C2 (depending on what kind of AI and how it is used).

A1 for the things currently being hyped as "AI".

C2 for the very different things that get conflated with them.

@ZachWeinersmith I’ll join the A2 crowd, with the proviso that much of the harm to society is a direct result of the excessive hype

@ZachWeinersmith

A2

I don't trust the men having control over it.

I hope it will die financially without making too much damages but I think we are already over a 2008 crisis magnitude.

@ZachWeinersmith

A2, but only because a friend pays the bills by training them and finding uses for them in the process

@ZachWeinersmith A1 with an option on A2 - I can't say for sure if there are workable situations because of the hype. I suspect there may be some. But also don't think the positives of any workable situation would outweigh the ethical, societal, and environmental negatives.

@ZachWeinersmith A1.

If you ignore cost/benefit, I can concede A2 as in there are narrow things it can do well but it will be prohibitibely expensive to use LLMs only in the niche applications they can work well, once we lose the VC expectation that AI will replace half the workforce and only a few select industies have to shoulder the entire bill of the thing. Hence A1 factoring in the cost.

@ZachWeinersmith I'm one of the lonely C2s.

It's like a lot of things. The technology itself could be grounded in a completely legal and moral landscape. We could imagine a moderately sized data farm powered by solar energy and consuming only public domain, open source, and creative common works for training.

If it's not being built that way, it's because society, not the technology, made some bad choices.

A2, being mostly harmful to society in myriad ways, while being partially functional in narrow use cases for some domains.

@ZachWeinersmith A3, but why is this not a poll?

Also, is this basically an AD&D alignment chart?

@ZachWeinersmith I'm usually C3 with caveats about capital concentration (what do you mean you are an "AI first" company but you don't run your own models?).

However, having had peculiar experiences with AI psychos in managerial positions, I sometimes land on A3...

I also feel like I lack the theoretical framework to evaluate the impact (in software engineering). E.g.

1. It's nice to ask Claude what the syntax is for the git command I forgot, but is it worth it having it run it for me without my knowledge?

2. The steps we take to reduce context windows for humans are likely good for LLMs too. How do we measure human context windows?

3. If a procedure can be described to the LLM and saved as a "skill", isn't it better to make it into a deterministic script?

4. Eventually the uncertainty/error rates of a natural language processor will be extremely low -- will they ever be lower than the bugs introduced by a deterministic procedure?

5. If you need to add more and more details to get the LLM to do what you want, how is that different from writing lower level code?

If anyone has something related I'd love to read it.