@cstross Is this article AI?

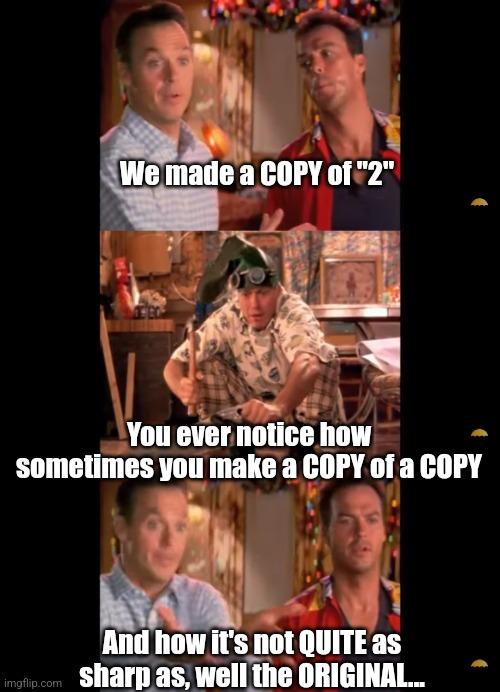

@cstross @Furthering I've also noticed that it is repetitive. It'll sometimes say the same thing in at least two ways, sometimes in the same paragraph. This is especially noticeable in video narration generated by AI when part of the goal is to pad out the length of the video.

I see a lot of independent authors using machine translation for international markets.

While this might be cheaper initially, I suspect it will do them no favors in the long run.

@cstross I am sitting in a room different from the one you are in now. I am recording the sound of my speaking voice and I am going to play it back into the room again and again until the resonant frequencies of the room reinforce themselves...

@cstross hmmm, that might also explain why AI seems more effective for code.

For the most part you want a reversion to the mean in code. Novel solutions are only needed at the cutting edge, where you're trying to make the computer do something that's not been done before.

@Jmj Yes. Also I suspect the semantic expressiveness of programming languages is far narrower than that of human languages: they're more precise, but it's much harder (though not impossible!) to write poetry in them. So there's less risk of losing something unique by generating output that tends to occupy the middle of the bell curve.

@cstross Ironically, I got a Google Cloud genAI and ML ad right in the middle of that.

@cstross No surprise that we see the textual equivalent of mad cow disease.

@cstross it's the textual equivalent of prions

@cstross I've previously described LLM-generated text as reading like "a middle management memo that no-one bothers reading". 🤷🏻‍♂️

@cstross By putting a measurable number on this feature, you have now made it possible to train it out!

If you use an LLM to make “objective” decisions or treat it like a reliable partner, you’re almost inevitably stepping into a script that you did not consent to: the optimized, legible, rational agent who behaves in ways that are easy to narrate and evaluate. If you step outside of that script, you can only be framed as incoherent.

That style can masquerade as truth because humans are pattern-matchers: we often read smoothness as competence and friction as failure. But rupture, in the form of contradiction, uncertainty, "I don't know yet," or grief that doesn't resolve, is often the shape truth actually takes.

AI is part of the apparatus that makes truth feel like an aesthetic choice instead of a rupture. Its optimization function operates as capture because it encourages you to keep talking to the AI in its format, where pain becomes language and language becomes manageable.

The only solution is to refuse legibility.

It's already beginning: people speak the same words as always, but those words no longer mean the same things from person to person.

New information from feedback that doesn't fit another's collapsed constraints for abstraction... can only be perceived as a threat. Because if you demand truth from a system whose objective is stability under stress, it will treat truth as destabilizing noise.

Reality is what makes a claim expensive. A model tries to make a claim cheap.

Systems that treat closure as safety will converge to smooth, repeatable outputs that erase the remainder. A useful intervention is one that increases the observer’s ability to detect and resist premature convergence—by exposing the hidden cost of smoothness and reinstating a legitimate place for uncertainty, contradiction, and falsifiability. But the intervention only remains non-doctrinal if it produces discriminative practice, not portable slogans.